AI_SI Piano is a MIDI trigger device built around the kind of touchpad found in any laptop computer, reduced to an oversimplified instrument that acts as a piano. The idea came quickly, from a fragment of a recent dream of mine: in the dream I would just bang on a touchpad with my finger, and it sounded like a “veritable” piano…

So: again one of those projects that is not directly linked to any envisioned artistic output. But as an instrument it could be used on occasions, serious and less serious.

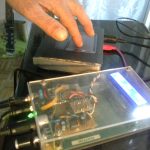

The heart of the system is (of course) an Arduino. The Arduino Pro Micro can act as a native USB device, so it was chosen (the SparkFun Pro Micro 5V 16MHz version, as a Chinese clone). An old laptop (Sony Vaio) provided the touchpad and the parts for the instrument housing. It is pretty worn out and shows its extensive use. The LCD screen was long gone, and at some point the laptop served as a local server. I say: Thank you!

Basic conceptual rule: Touch is a trigger

A touch on the touchpad is always a trigger: the instrument never plays on its own. In this sense the instrument should be fairly classic. It needs to be played… to play back.

AI (“artificial intelligence”) mode

Since I am stepping a little into the AI (artificial intelligence) world, giving it a try here did not seem like a bad idea. AI here does not mean using one of the established AI algorithms, but anything able to produce “the right note” based on the preceding note progression and the preceding playing dynamics. Various tonal scales would be taken into consideration, etc. Randomness will still be very helpful. Basically, this AI is not AI but rather a system based on statistics of recent events, combined with some predictable behavior regarding what the next note would (most likely) be.
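The “statistics of recent events” idea can be sketched in a few lines. This is only an illustration under stated assumptions, not the code of the planned libraries: the class name `IntervalStats`, the window of 16 intervals, the one-octave limit and the rule that every interval keeps a base weight of 1 (so that randomness stays in play) are all hypothetical. The random number is passed in from outside so the behavior can be checked on a PC; scales are left out to keep it short.

```cpp
#include <cstddef>
#include <deque>

// All names and constants here are hypothetical, for illustration only.
constexpr int kMaxInterval = 12;  // consider steps of up to one octave

inline int clampInt(int v, int lo, int hi) {
    return v < lo ? lo : (v > hi ? hi : v);
}

class IntervalStats {
public:
    explicit IntervalStats(std::size_t window = 16) : window_(window) {}

    // Remember the interval from the previously played note to this one.
    void record(int note) {
        if (hasLast_) {
            recent_.push_back(clampInt(note - last_, -kMaxInterval, kMaxInterval));
            if (recent_.size() > window_) recent_.pop_front();
        }
        last_ = note;
        hasLast_ = true;
    }

    // Pick the next note. r is a random number in [0, 1).
    // Each interval starts with weight 1 and gains 1 per recent occurrence.
    int next(double r) const {
        int weights[2 * kMaxInterval + 1];
        for (int& w : weights) w = 1;
        for (int iv : recent_) weights[iv + kMaxInterval] += 1;

        int total = 0;
        for (int w : weights) total += w;

        const double target = r * total;
        int cumulative = 0;
        int chosen = kMaxInterval;  // fallback if r is out of range
        for (int i = 0; i <= 2 * kMaxInterval; ++i) {
            cumulative += weights[i];
            if (target < cumulative) {
                chosen = i - kMaxInterval;
                break;
            }
        }
        return clampInt(last_ + chosen, 0, 127);  // stay inside MIDI range
    }

private:
    std::size_t window_;
    std::deque<int> recent_;
    int last_ = 60;  // start from middle C until a note has been recorded
    bool hasLast_ = false;
};
```

After a run of whole-tone steps upward, a whole tone up becomes the most likely next interval, but every other interval remains possible. On the Arduino itself the `std::deque` would have to be replaced by a small fixed array.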

At this point I am at a relatively basic level: the touchpad system is behaving correctly. Touchpad data, including the left and right (mouse) buttons, arrives over a so-called PS/2 bus. It is received by the Arduino Pro Micro and translated into MIDI data. Some additional ideas were added: the left button stops the last note, acting as a kind of piano pedal that mutes the sound. Otherwise the instrument is in sustain mode. The right button acts as a chord player. Chords are not much of a separate line of thought from tonal intervals.
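The trigger and button logic described above might look roughly like this. It is a sketch under assumptions, not the actual sketch file: `TouchTrigger` and `MidiMsg` are made-up names, the chord is assumed to be a major triad, and everything goes out on MIDI channel 1. The only fixed facts used are the MIDI status bytes, 0x90 for note-on and 0x80 for note-off. The PS/2 reading itself is left out; the function just takes the already decoded touch and button states.

```cpp
#include <cstdint>
#include <vector>

// Hypothetical 3-byte MIDI message (status, note, velocity).
struct MidiMsg {
    uint8_t status;
    uint8_t data1;
    uint8_t data2;
};

class TouchTrigger {
public:
    // Call once per touchpad report; returns the MIDI messages to send.
    std::vector<MidiMsg> update(bool touching, bool leftBtn, bool rightBtn,
                                uint8_t note, uint8_t velocity) {
        std::vector<MidiMsg> out;

        // Left button just pressed: mute whatever was triggered last.
        if (leftBtn && !wasLeft_) {
            for (uint8_t n : last_) out.push_back({0x80, n, 0});
            last_.clear();
        }

        // Finger just landed: trigger one note, or a chord if the
        // right button is held. Earlier notes keep sustaining.
        if (touching && !wasTouching_) {
            last_.clear();
            static const int chord[] = {0, 4, 7};  // assumed major triad
            const int count = rightBtn ? 3 : 1;
            for (int i = 0; i < count; ++i) {
                const int n = note + chord[i];
                if (n > 127) continue;  // outside MIDI note range
                last_.push_back(static_cast<uint8_t>(n));
                out.push_back({0x90, static_cast<uint8_t>(n), velocity});
            }
        }

        wasTouching_ = touching;
        wasLeft_ = leftBtn;
        return out;
    }

private:
    std::vector<uint8_t> last_;  // notes of the last trigger
    bool wasTouching_ = false;
    bool wasLeft_ = false;
};
```

Holding the finger down produces nothing further, which matches the rule that a touch is a trigger and nothing more.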

SI (“standard intelligence”) mode

In SI mode a touch on the touchpad triggers a note based on some scale. It is not yet clear what, apart from randomness, would define the scale. An LCD with a rotary encoder seems just too much: we are not building a piano, or any kind of conventionally controllable instrument, except on the level of triggering. A touch should always output a tone/note, but the tonal value is not to be defined by the person playing. Of course, the note/tone/chord should relate to some musical conventions, at some points even transgressing them, but it should force the person playing to accept the note selected or the chord constructed by the machine, and adapt to it. But this is AI stuff again.

Back to SI

Switchable SI mode is not very demanding: it still provides control of the note/tone through the position of the finger on the touchpad. The touchpad reports X and Y position, and the note is read along the diagonal from the bottom-left to the top-right. Forced half tones could maybe be triggered by going a trifle above (or below) the diagonal line, but this is yet to be done. Some force detection is also provided by the touchpad: the measure is the area of the finger in contact. This is then added non-linearly to the MIDI velocity.
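A possible mapping from position and contact area to note and velocity, as a sketch only. The coordinate ranges in `kPad` are placeholders and not measured from this touchpad; the two-octave C major scale, the base velocity of 40 and the quadratic curve are likewise assumptions chosen for illustration. Integer math is used throughout so the same code could run on a small microcontroller.

```cpp
#include <cstdint>

// Placeholder ranges; measure your own touchpad before using them.
struct PadRange {
    int32_t xMin, xMax, yMin, yMax, zMax;
};
constexpr PadRange kPad = {1000, 5000, 1000, 4000, 255};

// Scale v from [lo, hi] to 0..1000, clamping at both ends.
inline int32_t scaleTo1000(int32_t v, int32_t lo, int32_t hi) {
    if (v <= lo) return 0;
    if (v >= hi) return 1000;
    return (v - lo) * 1000 / (hi - lo);
}

// Position along the bottom-left to top-right diagonal picks one of
// 15 steps of an assumed C major scale (MIDI notes 48..72).
uint8_t noteFromPosition(const PadRange& p, int32_t x, int32_t y) {
    static const int major[7] = {0, 2, 4, 5, 7, 9, 11};
    const int32_t steps = 15;
    const int32_t t = (scaleTo1000(x, p.xMin, p.xMax) +
                       scaleTo1000(y, p.yMin, p.yMax)) / 2;
    const int32_t idx = t * steps / 1001;  // 0..14
    return static_cast<uint8_t>(48 + 12 * (idx / 7) + major[idx % 7]);
}

// Contact area z is added to a base velocity along a quadratic curve,
// so light touches stay soft and only a flat finger reaches 127.
uint8_t velocityFromArea(const PadRange& p, int32_t z) {
    if (z < 0) z = 0;
    if (z > p.zMax) z = p.zMax;
    return static_cast<uint8_t>(40 + 87 * z * z / (p.zMax * p.zMax));
}
```

With these placeholder ranges the bottom-left corner gives note 48, the center of the pad gives middle C (60) and the top-right corner gives 72.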

A slide of the fingertip on the pad produces a sequence of notes/tones. The resolution here is a matter of carefully setting the threshold values in the Arduino sketch. The sliding action conflicts directly with the debouncing needed on touch (without it, a slower touch produces double or multiple tones). Another filter deals with multi-finger touch: this old touchpad is capacitive (this is good…) but cannot do multi-touch. So: a quick succession of three fingers tapping on the pad should result in effortless playing of the AI_SI piano.
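One way to reconcile sliding with debouncing is to use two separate thresholds: a time window for new contacts and a distance for slides. The following is a sketch of that idea and not the filter used in the actual sketch file; `TriggerFilter` and both threshold values are hypothetical and would need tuning by ear. The timestamp is passed in (on the Arduino it would come from `millis()`).

```cpp
#include <cstdint>

class TriggerFilter {
public:
    TriggerFilter(uint32_t debounceMs, int32_t slideThreshold)
        : debounceMs_(debounceMs), slideThreshold_(slideThreshold) {}

    // Returns true when this report should trigger a new note.
    bool update(bool touching, int32_t x, int32_t y, uint32_t nowMs) {
        bool fire = false;
        if (touching && !wasTouching_) {
            // New contact: fire unless it follows the last trigger too
            // closely, in which case it is treated as a bounce.
            fire = !hasFired_ || (nowMs - lastFireMs_ >= debounceMs_);
            anchorX_ = x;  // slides are measured from here either way
            anchorY_ = y;
        } else if (touching) {
            // Finger still down: fire once it has moved far enough.
            const int32_t dx = x - anchorX_;
            const int32_t dy = y - anchorY_;
            fire = dx * dx + dy * dy >= slideThreshold_ * slideThreshold_;
        }
        if (fire) {
            lastFireMs_ = nowMs;
            anchorX_ = x;
            anchorY_ = y;
            hasFired_ = true;
        }
        wasTouching_ = touching;
        return fire;
    }

private:
    uint32_t debounceMs_;
    int32_t slideThreshold_;
    uint32_t lastFireMs_ = 0;
    int32_t anchorX_ = 0;
    int32_t anchorY_ = 0;
    bool wasTouching_ = false;
    bool hasFired_ = false;
};
```

A short debounce window keeps fast three-finger tapping playable, while a larger slide distance keeps a resting, slightly jittery finger from retriggering.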

Build-up

The SI mode is mostly fulfilled with the instrument shown below. It runs a self-made stand-alone Yamaha DB-50XG MIDI daughter board (modern size-reduced versions can be obtained quite cheaply…). Of course, it can also run on any PC using native General MIDI instruments. Link to my DB50XG project…

I used:

* Sparkfun Arduino Pro Micro 16MHz 5V (https://www.sparkfun.com/products/12640 or Chinese clone)

* https://learn.sparkfun.com/tutorials/pro-micro--fio-v3-hookup-guide/troubleshooting-and-faq

* USB MIDI and DIN MIDI out

* the touchpad uses the T1004 capacitive sensing ASIC designed by Synaptics

* http://sparktronics.blogspot.com/2008/05/synaptics-t1004-based-touchpad-to-ps2.html

* https://www.hackster.io/BuildItDR/arduino-controlled-usb-trackpad-f443a6

The flat, semi-transparent orange-brown foil cable is the PS/2 connection. I desoldered its original connector from the Vaio motherboard and reused it on the perfboard. Below the Pro Micro is the location for an EEPROM. The EEPROM is to be used in AI mode, to retain some memory of past dynamics.

Troubles encountered:

* at an early stage the USB MIDI was not sent; the solution was to trigger some sending in the Arduino setup() function

* the Arduino board still freezes after some time, especially when used with USB MIDI

* very large latency and slow response on DIN MIDI OUT when used in parallel with USB MIDI

* partial solution: do not use both at the same time; switch between DIN MIDI OUT and USB MIDI
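Switching between the two outputs means framing the same message in two ways. As a sketch (the names `MidiPort` and `frameMessage` are made up, and only note-on/note-off style messages with two data bytes are covered): DIN MIDI sends the three bytes as they are over a 31250 baud serial line, while USB MIDI wraps them in a 4-byte event packet whose first byte carries the code index number, which for these messages equals the upper nibble of the status byte.

```cpp
#include <cstdint>
#include <vector>

// Hypothetical selector for the single active output.
enum class MidiPort { Usb, Din };

// Returns the raw bytes to send for one channel voice message
// with two data bytes (for example note-on or note-off).
std::vector<uint8_t> frameMessage(MidiPort port, uint8_t status,
                                  uint8_t d1, uint8_t d2) {
    if (port == MidiPort::Usb) {
        // USB MIDI event packet on cable 0: header, then the message.
        return {static_cast<uint8_t>(status >> 4), status, d1, d2};
    }
    // DIN MIDI: the plain 3-byte message.
    return {status, d1, d2};
}
```

This only shows the byte layout for one output at a time, in line with the partial solution above; it does not address the freezing or the latency.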

Arduino sketch (rename .txt extension to .ino; uses ps2 and MIDIUSB arduino libraries) – version 0.2

The last photo shows the DreamBlaster S2 General MIDI Synth purchased here: https://www.serdashop.com/waveblaster

A stereo audio output was added – so that the device is now a self-contained “piano”.

Work is now under way on adding the AI part in the form of three Arduino libraries: midimelodics, midirhythmics and midistatistics. Not yet finished.

to be continued

my RSS
